publications

2024

-

Leveraging Machine-Generated Rationales to Facilitate Social Meaning Detection in ConversationsRitam Dutt, Zhen Wu, Kelly Shi, and 3 more authors2024

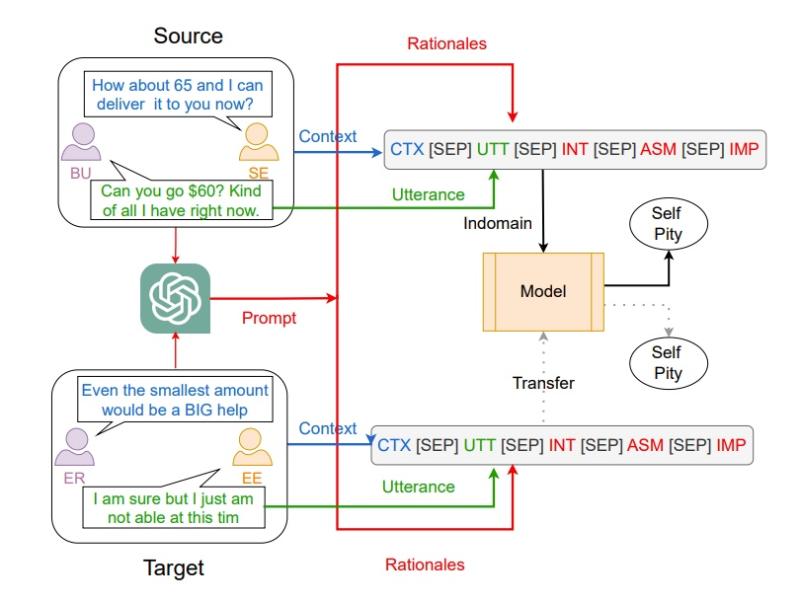

Leveraging Machine-Generated Rationales to Facilitate Social Meaning Detection in ConversationsRitam Dutt, Zhen Wu, Kelly Shi, and 3 more authors2024We present a generalizable classification approach that leverages Large Language Models (LLMs) to facilitate the detection of implicitly encoded social meaning in conversations. We design a multi-faceted prompt to extract a textual explanation of the reasoning that connects visible cues to underlying social meanings. These extracted explanations or rationales serve as augmentations to the conversational text to facilitate dialogue understanding and transfer. Our empirical results over 2,340 experimental settings demonstrate the significant positive impact of adding these rationales. Our findings hold true for in-domain classification, zero-shot, and few-shot domain transfer for two different social meaning detection tasks, each spanning two different corpora.

-

CodeBenchGen: Creating Scalable Execution-based Code Generation BenchmarksYiqing Xie, Alex Xie, Divyanshu Sheth, and 3 more authors2024

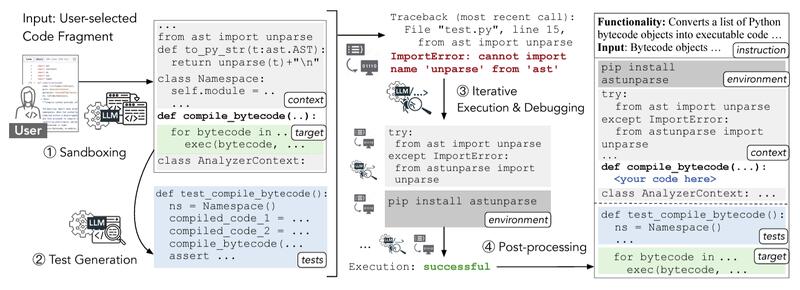

CodeBenchGen: Creating Scalable Execution-based Code Generation BenchmarksYiqing Xie, Alex Xie, Divyanshu Sheth, and 3 more authors2024To facilitate evaluation of code generation systems across diverse scenarios, we present CodeBenchGen, a framework to create scalable execution-based benchmarks that only requires light guidance from humans. Specifically, we leverage a large language model (LLM) to convert an arbitrary piece of code into an evaluation example, including test cases for execution-based evaluation. We illustrate the usefulness of our framework by creating a dataset, Exec-CSN, which includes 1,931 examples involving 293 libraries revised from code in 367 GitHub repositories taken from the CodeSearchNet dataset. To demonstrate the complexity and solvability of examples in Exec-CSN, we present a human study demonstrating that 81.3% of the examples can be solved by humans and 61% are rated as “requires effort to solve”. We conduct code generation experiments on open-source and proprietary models and analyze the performance of both humans and models. We will release the code of both the framework and the dataset upon acceptance.

2023

-

Rationale-Guided Few-Shot Classification to Detect Abusive LanguagePunyajoy Saha, Divyanshu Sheth, Kushal Kedia, and 2 more authors2023

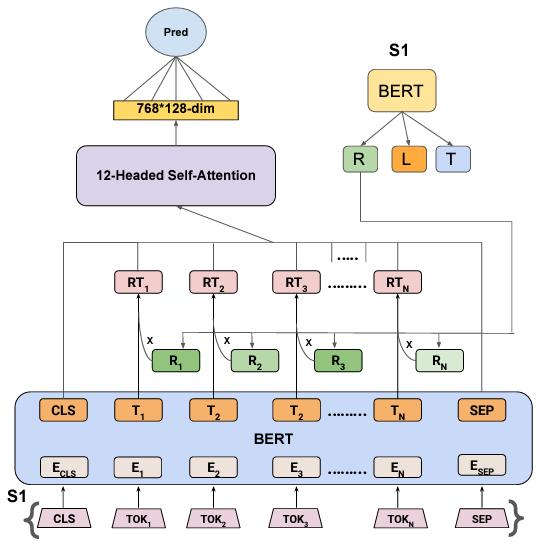

Rationale-Guided Few-Shot Classification to Detect Abusive LanguagePunyajoy Saha, Divyanshu Sheth, Kushal Kedia, and 2 more authors2023Abusive language is a concerning problem in online social media. Past research on detecting abusive language covers different platforms, languages, demographies, etc. However, models trained using these datasets do not perform well in cross-domain evaluation settings. To overcome this, a common strategy is to use a few samples from the target domain to train models to get better performance in that domain (cross-domain few-shot training). However, this might cause the models to overfit the artefacts of those samples. A compelling solution could be to guide the models toward rationales, i.e., spans of text that justify the text’s label. This method has been found to improve model performance in the in-domain setting across various NLP tasks. In this paper, we propose RGFS (Rationale-Guided Few-Shot Classification) for abusive language detection. We first build a multitask learning setup to jointly learn rationales, targets, and labels, and find a significant improvement of 6% macro F1 on the rationale detection task over training solely rationale classifiers. We introduce two rationale-integrated BERT-based architectures (the RGFS models) and evaluate our systems over five different abusive language datasets, finding that in the few-shot classification setting, RGFS-based models outperform baseline models by about 7% in macro F1 scores and perform competitively to models finetuned on other source domains. Furthermore, RGFS-based models outperform LIME/SHAP-based approaches in terms of plausibility and are close in performance in terms of faithfulness.

-

Can Language Models Evaluate like Humans? Leveraging Large Language Models for Few-Shot and Many-Shot Dialog Systems EvaluationDivyanshu Sheth2023

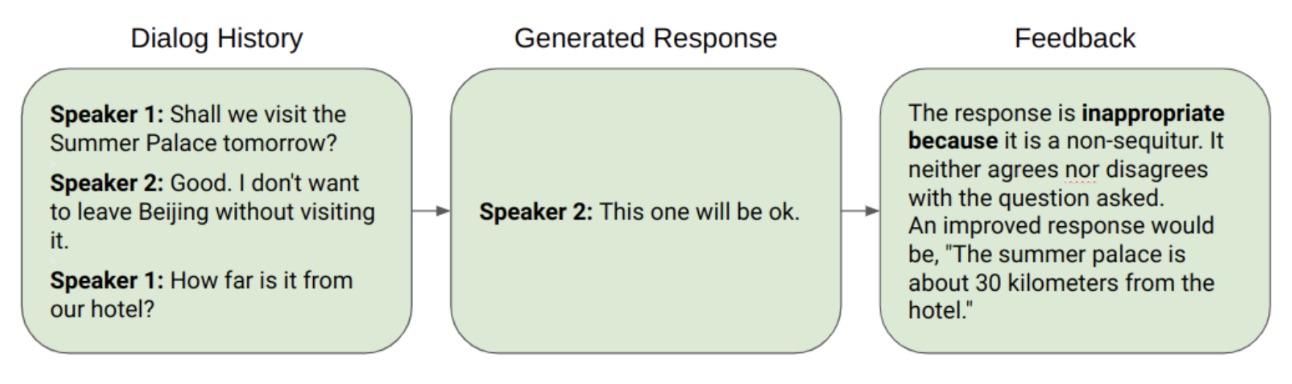

Can Language Models Evaluate like Humans? Leveraging Large Language Models for Few-Shot and Many-Shot Dialog Systems EvaluationDivyanshu Sheth2023We experiment with dialog evaluation techniques involving large language models such as GPT and T5. With there being quite a few challenges in automated dialog system evaluation, not limited to non-applicability of otherwise standard metrics for natural language evaluation such as BLEU and ROUGE, we hope to discover the capabilities of previously unused large language models, including 100B+ parameter models such as GPT-3 for few-shot dialog evaluation and feedback generation, and GPT-2 and FLAN-T5 in many-shot settings. We discover that these models can beat the state-of-the-art approaches in dialog response evaluation on many metrics, while falling behind on some other metrics. We conduct analyses of their performances in several settings and lay out future plans for the next stage of experiments and analyses to be conducted.

2022

-

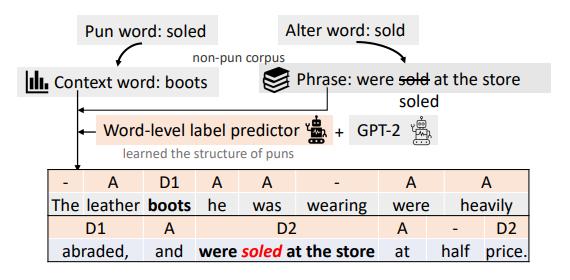

A Unified Framework for Pun Generation with Humor PrinciplesYufei Tian, Divyanshu Sheth, and Nanyun PengIn Findings of the Association for Computational Linguistics: EMNLP 2022, Dec 2022

A Unified Framework for Pun Generation with Humor PrinciplesYufei Tian, Divyanshu Sheth, and Nanyun PengIn Findings of the Association for Computational Linguistics: EMNLP 2022, Dec 2022We propose a unified framework to generate both homophonic and homographic puns to resolve the split-up in existing works. Specifically, we incorporate three linguistic attributes of puns to the language models: ambiguity, distinctiveness, and surprise. Our framework consists of three parts: 1) a context words/phrases selector to promote the aforementioned attributes, 2) a generation model trained on non-pun sentences to incorporate the context words/phrases into the generation output, and 3) a label predictor that learns the structure of puns which is used to steer the generation model at inference time. Evaluation results on both pun types demonstrate the efficacy of our model over strong baselines.

2021

-

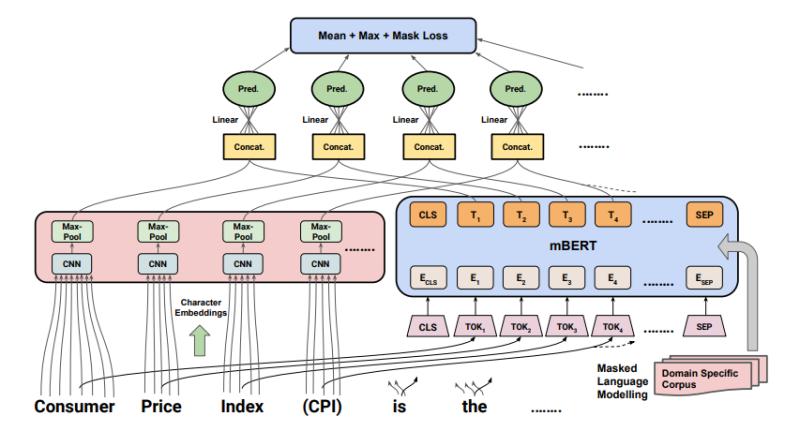

CABACE: Injecting Character Sequence Information and Domain Knowledge for Enhanced Acronym and Long-Form ExtractionNithish Kannen, Divyanshu Sheth, Abhranil Chandra, and 1 more authorDec 2021

CABACE: Injecting Character Sequence Information and Domain Knowledge for Enhanced Acronym and Long-Form ExtractionNithish Kannen, Divyanshu Sheth, Abhranil Chandra, and 1 more authorDec 2021Acronyms and long-forms are commonly found in research documents, more so in documents from scientific and legal domains. Many acronyms used in such documents are domain-specific and are very rarely found in normal text corpora. Owing to this, transformer-based NLP models often detect OOV (Out of Vocabulary) for acronym tokens, especially for non-English languages, and their performance suffers while linking acronyms to their long forms during extraction. Moreover, pretrained transformer models like BERT are not specialized to handle scientific and legal documents. With these points being the overarching motivation behind this work, we propose a novel framework CABACE: Character-Aware BERT for ACronym Extraction, which takes into account character sequences in text and is adapted to scientific and legal domains by masked language modelling. We further use an objective with an augmented loss function, adding the max loss and mask loss terms to the standard cross-entropy loss for training CABACE. We further leverage pseudo labelling and adversarial data generation to improve the generalizability of the framework. Experimental results prove the superiority of the proposed framework in comparison to various baselines. Additionally, we show that the proposed framework is better suited than baseline models for zero-shot generalization to non-English languages, thus reinforcing the effectiveness of our approach. Our team BacKGProp secured the highest scores on the French dataset, second-highest on Danish and Vietnamese, and third-highest in the English-Legal dataset on the global leaderboard for the acronym extraction (AE) shared task at SDU AAAI-22.